Objectivity

The objectivity of a test means that the results obtained with the test are independent of the examiner (Ziegler & Bühner, 2012). A distinction is made between implementation objectivity (the result is independent of the test administrator or the examination situation), evaluation objectivity (the result is independent of the person evaluating it), and interpretation objectivity (different interpreters arrive at the same conclusion based on the results). If objectivity is insufficient, a test may also be invalid, i.e., it may not predict the success of candidates. Computer-based tests such as those in the Vienna Test System achieve maximum objectivity of all three types thanks to standardized administration on the computer, automated scoring, and standardized interpretation.

Reliability

The reliability of a test describes the degree of accuracy with which a suitability characteristic is measured (Ziegler & Bühner, 2012). Reliability coefficients can range from 0 to 1, with a higher value indicating greater accuracy. According to the test evaluation guidelines of the European Federation of Psychologists' Associations (EFPA, 2025), reliability values above 0.7 are considered adequate, above 0.8 good, and above 0.9 excellent. However, tests with lower reliability can still be used for screening purposes.
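To make these thresholds concrete, the following minimal Python sketch maps a reliability coefficient to the verbal labels quoted above and derives the standard error of measurement from classical test theory (SEM = SD × √(1 − reliability)). The function names, the label "below adequate", and the example values (a T-score of 50 on a scale with SD = 10, reliability 0.84) are illustrative assumptions and do not come from any test manual.

```python
import math


def reliability_label(reliability: float) -> str:
    """Return the verbal label for a reliability coefficient (thresholds as quoted in the text)."""
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must lie between 0 and 1")
    if reliability > 0.9:
        return "excellent"
    if reliability > 0.8:
        return "good"
    if reliability > 0.7:
        return "adequate"
    return "below adequate"  # hypothetical label for values of 0.7 or less


def standard_error_of_measurement(sd: float, reliability: float) -> float:
    """Classical test theory: SEM = SD * sqrt(1 - reliability)."""
    return sd * math.sqrt(1.0 - reliability)


def confidence_interval(score: float, sd: float, reliability: float, z: float = 1.96):
    """Approximate 95% confidence interval around an observed score."""
    sem = standard_error_of_measurement(sd, reliability)
    return score - z * sem, score + z * sem


# Hypothetical example: T-score of 50 (scale SD = 10), reliability 0.84.
label = reliability_label(0.84)
low, high = confidence_interval(50.0, 10.0, 0.84)
print(label, round(low, 1), round(high, 1))
```

With these assumed values the test counts as "good", the standard error of measurement is 4 T-score points, and the interval runs from about 42.2 to 57.8. This illustrates why higher reliability translates into narrower uncertainty around an individual result.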

The tests in the Vienna Test System (VTS) all have at least adequate reliability. In addition, some VTS tests that were constructed on the basis of item response theory and are designed as adaptive tests with large item pools allow the test administrator to adjust the reliability.
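How a target reliability can steer an adaptive test may be sketched as follows. The sketch relies on two textbook relationships from item response theory: the standard error of the ability estimate is 1/√(test information), and reliability is approximately 1 − SE²/variance of the ability. It is an assumption-laden illustration, not the stopping rule actually implemented in the VTS; the function names and the constant item information of 0.6 are hypothetical.

```python
import math


def se_threshold(target_reliability: float, ability_variance: float = 1.0) -> float:
    """Standard error that corresponds to a target reliability (rel ~ 1 - SE^2 / variance)."""
    if not 0.0 < target_reliability < 1.0:
        raise ValueError("target reliability must lie strictly between 0 and 1")
    return math.sqrt(ability_variance * (1.0 - target_reliability))


def items_needed(item_informations, target_reliability: float):
    """Count items until the accumulated information pushes the SE below the threshold.

    Returns None if the item pool is exhausted before the target is reached.
    """
    threshold = se_threshold(target_reliability)
    total_information = 0.0
    for count, information in enumerate(item_informations, start=1):
        total_information += information
        if 1.0 / math.sqrt(total_information) <= threshold:
            return count
    return None


# Hypothetical pool: 30 items, each contributing an information of 0.6 at the person's ability.
pool = [0.6] * 30
print(items_needed(pool, 0.8), items_needed(pool, 0.9))
```

Under these assumptions a target reliability of 0.8 is reached after 9 items and a target of 0.9 after 17 items, which shows the trade-off between measurement precision and test length.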

Criterion validity

Criterion validity means that test values of the respective psychometric test correlate with an external criterion relevant to the aptitude characteristic. For example, the test should be able to predict future professional success (e.g., sales, supervisor evaluations, grades in training tests, etc.), neuropsychological or clinical psychological diagnoses, or athletic performance. The following chapters explain the relationship between psychological tests and relevant external criteria in the areas of aptitude testing, clinical psychological assessments, and sports psychological assessments.

Aptitude assessment

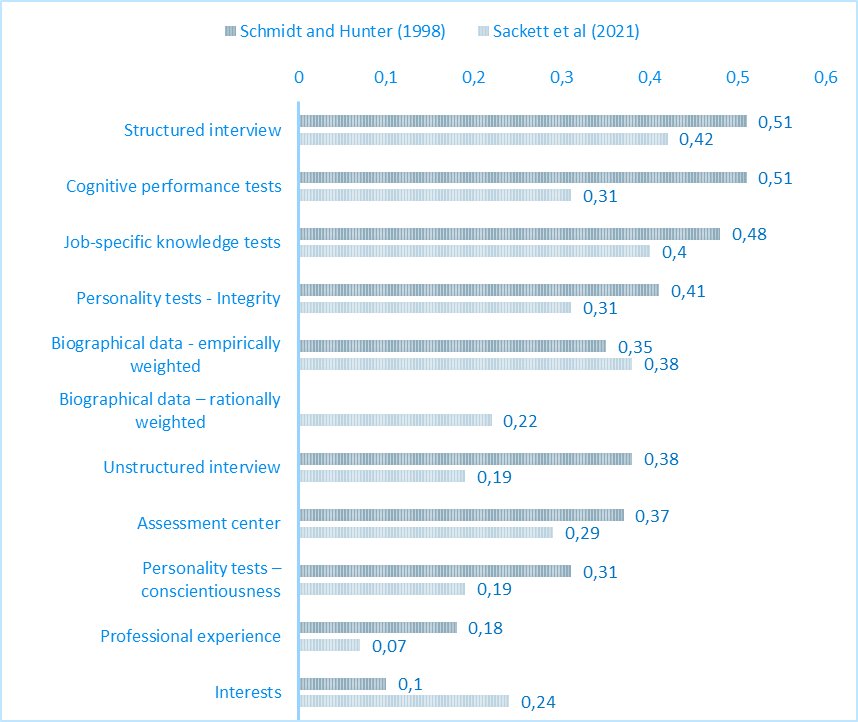

Although psychological tests cannot perfectly predict success in a particular job or training program, they can predict professional success with above-average accuracy, thereby enabling well-founded, objective selection decisions. Compared to other HR procedures, psychological tests have high predictive power for professional success. A meta-analysis by Schmidt and Hunter (1998) analyzed the criterion validity of various aptitude testing procedures, including cognitive performance tests, personality tests, structured interviews, unstructured interviews, assessment centers, references, and graphology. When the procedures are used in isolation, cognitive performance tests and structured interviews have the highest predictive validity for training and career success. The study also showed that predictive validity increases most when cognitive performance tests are combined with a structured interview or personality tests. The authors therefore suggest using other methods to supplement cognitive performance tests. One advantage of cognitive performance tests over labor-intensive interviews is that psychometric methods can be used across positions and administered efficiently in group testing. In summary, career success can best be predicted by combining various tests and other HR instruments.

The results of the Schmidt-Hunter study have been confirmed by other independent meta-analyses (e.g., Bertua, Anderson & Salgado, 2005). Sackett et al. (2021) revisited the original conclusions of Schmidt and Hunter (1998), particularly with regard to the effects of statistical corrections for range restriction on validity estimates. The authors concluded that these corrections present significant problems and that the validity of many procedures has been overestimated as a result. Even after the methodological adjustments, selection procedures that ranked highly in earlier studies continue to rank highly. However, as can be seen in Figure 1, the mean validity estimates were reduced by .10 to .20 points.
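The effect of a correction for restriction of variance (range restriction) can be illustrated with the classic formula for direct range restriction (Thorndike Case II). The sketch below is a generic textbook illustration and not the procedure used in either of the meta-analyses discussed above; the function name and the input values are hypothetical.

```python
import math


def correct_for_range_restriction(r_restricted: float, sd_ratio: float) -> float:
    """Thorndike Case II correction for direct range restriction.

    r_restricted: validity observed in the selected (restricted) sample
    sd_ratio:     SD of the predictor in the applicant pool divided by its SD
                  in the selected sample (values above 1 indicate restriction)
    """
    if sd_ratio <= 0:
        raise ValueError("sd_ratio must be positive")
    numerator = r_restricted * sd_ratio
    denominator = math.sqrt(1.0 - r_restricted**2 + (r_restricted**2) * sd_ratio**2)
    return numerator / denominator


# Hypothetical example: observed validity .30, applicant SD 1.5 times the SD of those hired.
observed = 0.30
corrected = correct_for_range_restriction(observed, 1.5)
print(round(corrected, 3))
```

With these assumed inputs the corrected validity is roughly .43 instead of .30. The size of the jump depends entirely on the assumed SD ratio, which is why applying such corrections with uncertain restriction information can inflate validity estimates.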

A recent study by Hambrick, Burgoyne, and Oswald (2024) confirms the continuing relevance of cognitive abilities for predicting job performance—regardless of work experience. Based on a large data set covering 31 different military occupations (N = 10,088), they examined the stability of the predictive validity of cognitive abilities across different levels of experience. While it is often argued that the influence of general cognitive abilities (g factor) decreases with increasing experience, the results show that g remains a significant predictor of job-specific performance even with high job experience. Although the predictive power of g tended to be slightly weaker in occupations with a high proportion of manual work, the predictive validity was consistently present across all military occupations examined. The authors conclude that many complex work tasks not only involve constant demands, but also variable challenges that require continuous adaptation and problem-solving skills—traits that are closely linked to cognitive performance. These results are consistent with previous meta-analyses, although Sackett et al. (2021) point out that earlier estimates of the validity of g may have been overestimated.

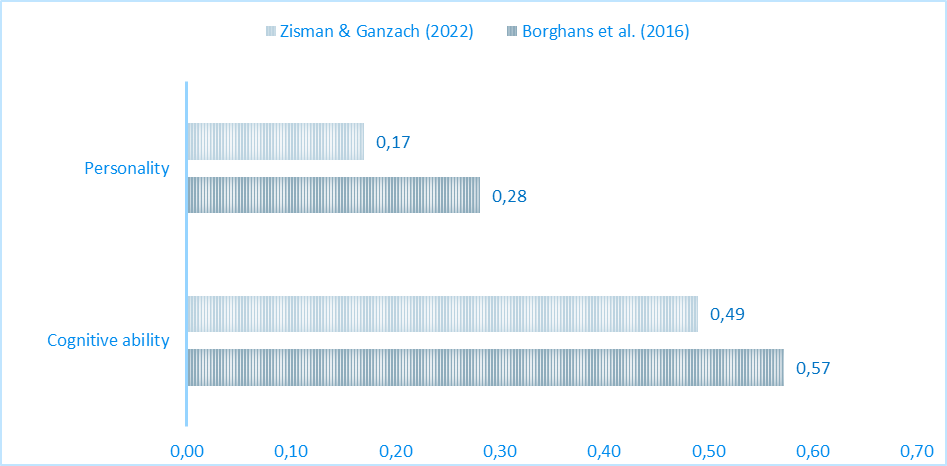

Cognitive performance tests can also be used to predict suitability for school and university education, teaching, and similar fields. Zisman and Ganzach (2022) provide current findings on educational success. In a replication of the study by Borghans et al. (2016), they examined the predictive power of cognitive performance tests and personality tests on educational success (Figure 2).

In the data sets they analyzed, the predictive power of intelligence in relation to academic and professional success was far higher than the predictive power of the Big Five personality dimensions. More detailed information on educational success can be found for each training-related aptitude assessment (see: Education).

Since personality questionnaires are used as a selection criterion in addition to cognitive performance (g), the question arises as to which personality dimensions are the strongest predictors of success. A large meta-analysis with N = 413,074 participants examined the correlations (ρ), corrected for measurement error, between the Big Five and academic success, which is itself a predictor of professional success (Mammadov, 2022). Since personality dimensions can correlate with g (which can lead to an overestimation of their influence), the reported correlations were additionally adjusted for the effect of g. These were ρ = .16 for openness, ρ = .27 for conscientiousness, ρ = .01 for extraversion, ρ = .09 for agreeableness, and ρ = .02 for emotional stability. In summary, both extraversion and emotional stability showed negligible effects, while conscientiousness, openness, and potentially also agreeableness can be interpreted as valuable predictors. In addition, g continued to be the strongest predictor with ρ = .42.
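What a correction for measurement error does to a correlation can be sketched with Spearman's classic disattenuation formula, in which the observed correlation is divided by the square root of the product of the two reliabilities. This is a generic illustration; the function name and the example values are hypothetical and are not taken from the meta-analysis.

```python
import math


def disattenuate(r_observed: float, reliability_x: float, reliability_y: float) -> float:
    """Spearman's correction for attenuation: rho = r / sqrt(r_xx * r_yy)."""
    if not (0.0 < reliability_x <= 1.0 and 0.0 < reliability_y <= 1.0):
        raise ValueError("reliabilities must lie in (0, 1]")
    return r_observed / math.sqrt(reliability_x * reliability_y)


# Hypothetical example: observed r = .20, both measures with reliability .80.
rho = disattenuate(0.20, 0.80, 0.80)
print(round(rho, 2))
```

With these assumed values, an observed correlation of .20 corresponds to a corrected correlation of .25. The less reliable the measures, the larger the difference between the observed and the corrected value.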

Another recent study analyzed the average personality traits of 68,540 participants in 263 different occupational groups and showed that many occupations have distinct personality profiles (Anni et al., 2025). In general, the personality traits of the various occupational groups in the study were largely as expected. For example, jobs in advertising and sales showed a high average extraversion, while people in engineering professions tended to score low on this dimension. The study also referred to the "work styles" from the O*NET database (O*NET OnLine, n.d.). O*NET is one of the most important platforms for occupational information in the US; more details are provided on the page: Personnel Selection. The "work styles" are a collection of requirements that are considered relevant to performance and success in various occupations and are based on the assessments of experts and job holders. These include, for example, leadership orientation, self-control, and initiative. The study was able to show that the "work style" requirements of the individual occupational groups correlate with the observed Big Five personality dimensions. The strongest correlations were found between perseverance/stamina and openness (ρ = .59), leadership ability and conscientiousness (ρ = .25), self-control and extraversion (ρ = .50), and integrity/righteousness and emotional stability (ρ = .28). Agreeableness showed no significant correlations, while extraversion and openness showed significant effects with most of O*NET's dimensions (Anni et al., 2025).

In summary, it can be said that aptitude testing methods such as cognitive performance tests are among the most effective instruments for career and training-related aptitude testing due to their high criterion validity. Current research findings confirm the high relevance of cognitive abilities (g) as the strongest single predictor of training and career success. Among personality traits, conscientiousness and openness are important complementary predictors, while the other dimensions show only minor additional effects. The above-mentioned studies indicate that personality questionnaires are criterion-valid with regard to work style and career success and have an incremental predictive value compared to cognitive abilities. Overall, the available evidence underscores the central role of empirically based, standardized selection procedures for valid, fair, and efficient personnel decisions.
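The idea of incremental predictive value can be made concrete with the standard formula for the multiple correlation of two predictors. The sketch below plugs in the correlations quoted above for academic success (ρ = .42 for g and ρ = .27 for conscientiousness). The intercorrelation of 0 between the two predictors is a purely hypothetical assumption, and the function name is illustrative.

```python
import math


def multiple_r(r1: float, r2: float, r12: float) -> float:
    """Multiple correlation of a criterion with two predictors.

    r1, r2: correlations of each predictor with the criterion
    r12:    correlation between the two predictors
    """
    if abs(r12) >= 1.0:
        raise ValueError("predictor intercorrelation must lie strictly between -1 and 1")
    r_squared = (r1**2 + r2**2 - 2.0 * r1 * r2 * r12) / (1.0 - r12**2)
    return math.sqrt(r_squared)


r_g = 0.42                  # g, as quoted in the text
r_conscientiousness = 0.27  # conscientiousness, as quoted in the text
assumed_intercorrelation = 0.0  # hypothetical, chosen only for illustration

combined = multiple_r(r_g, r_conscientiousness, assumed_intercorrelation)
increment = combined - r_g
print(round(combined, 3), round(increment, 3))
```

Under the assumption of uncorrelated predictors, the combined validity is about .50, an increment of roughly .08 over g alone. If the two predictors overlap, the increment shrinks accordingly.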

Clinical/neuropsychological assessments

In neuropsychological assessments, determining the level of neurocognitive functioning according to the definitions in the current manuals, ICD-11 or DSM-5, is necessary for the diagnosis and treatment planning of neurocognitive disorders such as dementia, as well as some developmental disorders such as ADHD (see: Clinical Psychology). For example, a meta-analysis with a total of 3,734 participants with and 2,969 without ADHD showed that executive functions are moderately impaired in ADHD (d or g ≈ 0.46–0.69), especially inhibition, vigilance, working memory, and planning. These are classic target constructs of STROOP, VIGIL, SPAN, and flexibility tasks such as the TMT-S (Willcutt et al., 2005).

Even in the clinical psychological diagnosis and therapy of disorders in which neurocognitive impairment is not a core symptom, determining the level of neurocognitive function can provide additional information. Psychological tests show robust group differences between healthy individuals and those with clinical diagnoses across numerous disorders—core evidence for their disorder-related criterion validity.

For example, a meta-analysis comparing 9,048 patients with schizophrenia and 8,814 healthy individuals shows a pronounced, generalized deterioration in performance (global mean Hedges' g ≈ −1.03), most pronounced in processing speed (g ≈ −1.25) and episodic memory (g ≈ −1.23). Tests such as the TMT-S (processing speed and cognitive flexibility) and SPAN (working memory) measure precisely those domains in which the largest group differences are found (Schaefer et al., 2013).
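For readers less familiar with the effect sizes reported in this section, the following sketch shows how Cohen's d and Hedges' g are computed from two group summaries. Hedges' g is Cohen's d multiplied by a small-sample correction factor. The group means, standard deviations, and sample sizes below are hypothetical and are not taken from any of the cited meta-analyses.

```python
import math


def cohens_d(mean1: float, sd1: float, n1: int, mean2: float, sd2: float, n2: int) -> float:
    """Standardized mean difference using the pooled standard deviation."""
    pooled_variance = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2)
    return (mean1 - mean2) / math.sqrt(pooled_variance)


def hedges_g(mean1: float, sd1: float, n1: int, mean2: float, sd2: float, n2: int) -> float:
    """Cohen's d with the usual small-sample correction J = 1 - 3 / (4 * df - 1)."""
    degrees_of_freedom = n1 + n2 - 2
    correction = 1.0 - 3.0 / (4.0 * degrees_of_freedom - 1.0)
    return correction * cohens_d(mean1, sd1, n1, mean2, sd2, n2)


# Hypothetical example: clinical group (M = 40, SD = 10, n = 50) vs. controls (M = 50, SD = 10, n = 50).
d = cohens_d(40.0, 10.0, 50, 50.0, 10.0, 50)
g = hedges_g(40.0, 10.0, 50, 50.0, 10.0, 50)
print(round(d, 3), round(g, 3))
```

In this assumed example the clinical group scores one pooled standard deviation below the control group (d = −1.0), and the correction changes the value only slightly (g ≈ −0.992), as is typical for samples of this size.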

For affective disorders, a meta-analysis showed that individuals with major depression (n = 784) had moderate deficits in executive functions, memory, and attention compared with healthy individuals (n = 727; Cohen's d ≈ −0.34 to −0.65). These differences persisted to a lesser extent even in remission (n = 168), a strong indication that the test values reflect time-stable group differences and cannot be fully explained by temporary mood or motivation effects (Rock et al., 2013). In another meta-analysis comparing 689 patients with bipolar disorder in euthymia with 721 individuals in the control group, medium to large effects (in some cases |d| ≥ 0.80) were found even in the euthymic state, including in executive functions and verbal learning (Robinson et al., 2006).

In some cases, personality traits also show disorder-specific profiles: A meta-analysis (N = 30,036 to 33,054) of numerous anxiety, depression, and substance use disorders compared individuals with a clinical diagnosis with individuals without one (Kotov et al., 2010). The clinical sample showed lower emotional stability (mean d = −1.65) and conscientiousness (mean d = −1.01). Furthermore, personality traits prospectively predict neurocognitive diagnoses: higher neuroticism was associated with an increased risk of dementia (hazard ratio (HR) = 1.24), while higher conscientiousness was associated with a reduced risk (HR = 0.77; Aschwanden et al., 2021). Personality traits are not included as standard in SFS Solutions for the clinical and neuro field, but can be recorded for specific assessment questions, for example with the FCB5 personality questionnaire.

In summary, meta-analyses across different disorders, domains, and instruments show consistent group differences between clinically diagnosed individuals and healthy control samples. Thus, criterion validity with regard to clinical status can be interpreted as given for the aforementioned ability and personality constructs.

Sports psychology assessments

Psychological tests show consistent correlations with athletic performance—core evidence for their criterion validity. A large meta-analysis (N = 8,860) found that more skilled athletes perform better on cognitive tests than less skilled athletes (total Hedges' g = 0.59, 95% CI [0.49; 0.69]). The effects are particularly pronounced in decision-making tasks (g = 0.77) and sport-specific tasks (Kalén et al., 2021). In another meta-analysis of 1,410 athletes from 17 studies, professionals across all sports showed higher cognitive performance than amateurs (r = 0.22; Scharfen et al., 2019). This supports the validity of domain-general tests such as TMT-S (processing speed), SPAN (working memory), and STROOP (interference control) for differentiating athletic performance levels. In addition, a meta-analysis by Liu et al. (2024) with 1,453 participants in multiple object tracking showed clear advantages for athletes over non-athletes (g = 0.56) and for experts over novices (g = 0.92). These results support the criterion validity of tests that address overview acquisition and reactive stress tolerance (e.g., ATAVT-2, DT, RT).
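Because the studies above report effects partly as standardized mean differences (g) and partly as correlations (r), it can help to put them on a common scale. A minimal sketch, assuming two groups of equal size, uses the standard conversions d = 2r / √(1 − r²) and r = d / √(d² + 4). The function names are illustrative, and treating Hedges' g like d is a simplification.

```python
import math


def r_to_d(r: float) -> float:
    """Convert a correlation to a standardized mean difference (equal group sizes assumed)."""
    if abs(r) >= 1.0:
        raise ValueError("r must lie strictly between -1 and 1")
    return 2.0 * r / math.sqrt(1.0 - r**2)


def d_to_r(d: float) -> float:
    """Convert a standardized mean difference to a correlation (equal group sizes assumed)."""
    return d / math.sqrt(d**2 + 4.0)


# Values quoted in the text: r = 0.22 (professionals vs. amateurs), g = 0.59 (skill level).
d_from_r = r_to_d(0.22)
r_from_g = d_to_r(0.59)
print(round(d_from_r, 2), round(r_from_g, 2))
```

Under the equal-groups assumption, r = 0.22 corresponds to d ≈ 0.45 and g = 0.59 corresponds to r ≈ 0.28, so the two meta-analyses point to effects of a broadly similar order of magnitude.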

Personality also contributes to athletic performance: Big Five factors and athletic performance are significantly positively correlated, especially in the dimensions of conscientiousness (r = 0.178) and extraversion (r = 0.145) (Yang et al., 2024). This effect remains consistent in both team and individual sports.

In summary, meta-analytic evidence shows medium to large effects of cognitive performance and small but statistically significant correlations between personality and athletic performance. Taken together, these findings can be interpreted as evidence of the criterion validity of the tests used in the context of sports psychology.

Fairness

Test fairness describes the extent to which the values resulting from a test do not lead to systematic discrimination against certain test takers. Systematic discrimination can arise, for example, on the basis of ethnic, sociocultural, or gender-specific affiliation (Kubinger, 2019).

Test fairness can refer not only to the content of the test items, but in principle to all aspects of a test procedure – from its construction and implementation to its evaluation. An overarching understanding of test fairness therefore involves equal treatment of all test takers in terms of test conditions, access to practice materials, feedback, and other aspects of test administration.

The Vienna Test System contributes to fairness by enabling a standardized test experience and test administrator independence. The fairness of the test items is examined for each individual test in the Vienna Test System with regard to gender, age, and educational level and reported in the respective test manuals. Since the Vienna Test System is designed for worldwide use, fairness across different cultures also plays a central role, which is explicitly taken into account in test construction and development.

Economy

Economy is understood as how resource-efficient a test is in comparison to the information gained (Kubinger, 2019). Compared to other instruments (e.g., assessment centers/work samples), psychological tests are usually much more economical. Computer tests are particularly economical because the test is administered in computerized form, the evaluation and reporting are automated, data management is simple, and group testing is possible.

Cost-benefit analyses show that the use of tests in personnel selection can substantially increase the hit rate for suitable candidates while simultaneously increasing productivity and reducing bad investments. The costs saved in this way mean that the investment in purchasing the tests pays for itself within a very short time.
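One common way to run such a cost-benefit analysis is the Brogden-Cronbach-Gleser utility model, sketched below. It estimates the monetary gain as number of hires × tenure × validity × monetary value of one standard deviation of job performance × mean standardized test score of those hired, minus the testing costs. The sketch assumes normally distributed test scores and strict top-down selection; all input values are hypothetical and serve only to show the mechanics.

```python
from statistics import NormalDist


def mean_z_of_selected(selection_ratio: float) -> float:
    """Mean standardized predictor score of those hired under top-down selection."""
    if not 0.0 < selection_ratio < 1.0:
        raise ValueError("selection ratio must lie strictly between 0 and 1")
    standard_normal = NormalDist()
    cut_score = standard_normal.inv_cdf(1.0 - selection_ratio)
    return standard_normal.pdf(cut_score) / selection_ratio


def selection_utility(n_applicants: int, selection_ratio: float, validity: float,
                      sd_performance_value: float, tenure_years: float,
                      cost_per_applicant: float) -> float:
    """Brogden-Cronbach-Gleser estimate of the monetary gain from using a test."""
    n_hired = n_applicants * selection_ratio
    gain = (n_hired * tenure_years * validity * sd_performance_value
            * mean_z_of_selected(selection_ratio))
    return gain - n_applicants * cost_per_applicant


# Hypothetical scenario: 100 applicants, 20% hired, validity .40,
# one SD of performance worth 20,000 per year, 2 years tenure, 50 per tested applicant.
utility = selection_utility(100, 0.2, 0.40, 20_000.0, 2.0, 50.0)
print(round(utility))
```

In this assumed scenario the estimated gain is in the range of several hundred thousand currency units against testing costs of 5,000. The result scales directly with validity: a test with a validity of zero would only produce costs.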

References can be found here: Literature